Black History Month may be over, but for black women (and those who love them), the party’s only half-over, because March is Women’s History Month—and ain’t I a woman? Nestled at the intersection of blackness and womanhood, we know black women’s histories are rich and varied; we may not be anyone’s mules, but we are arguably the backbone of the United States and myriad social justice movements within it. Historically, ours were the hands that raised not only our own families but generations of America’s leadership. Now, we are one of the most powerful voting blocs in the country.

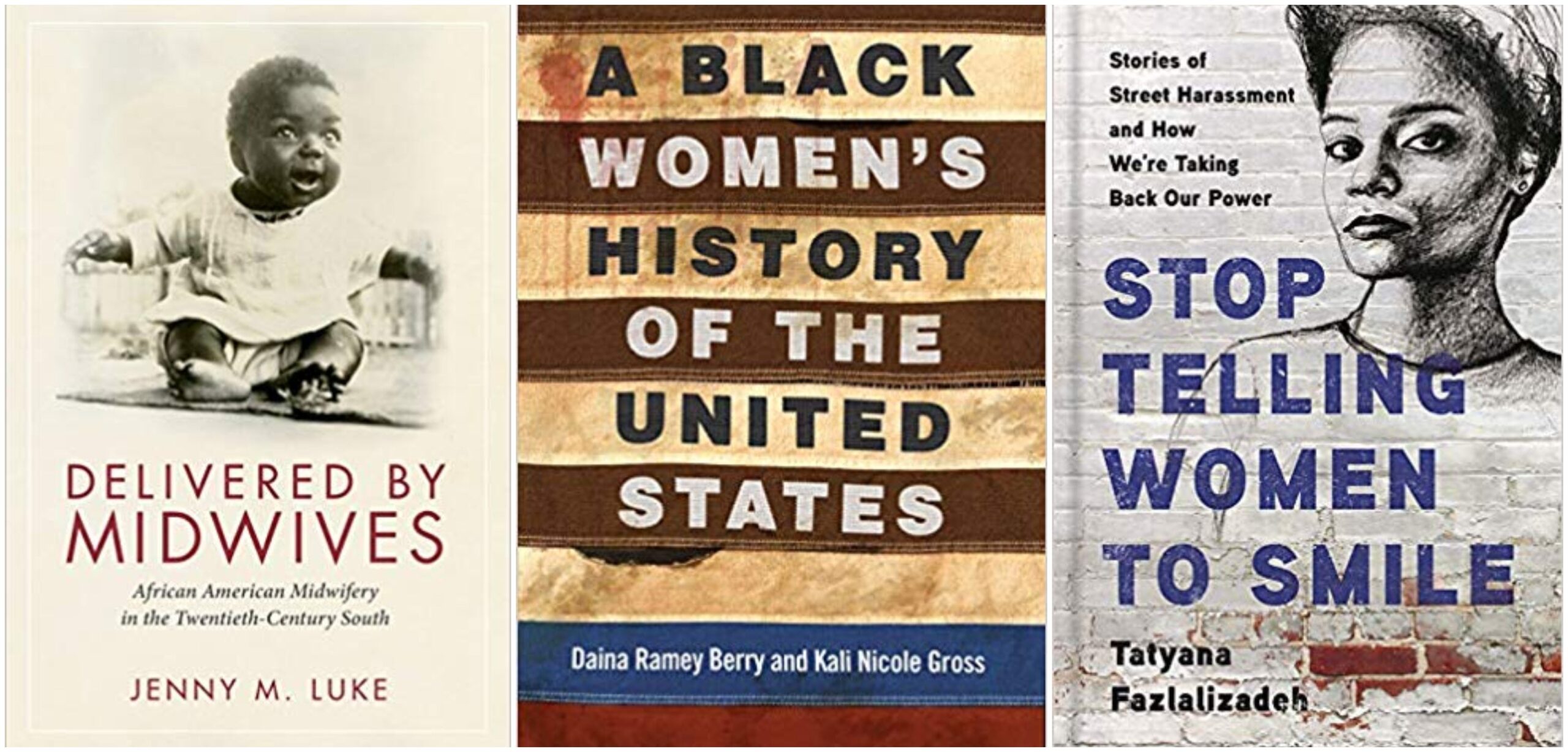

Black history is American history; similarly, black women’s history is women’s history. With that in mind, we’ve compiled a collection of new (and new-ish) books that celebrate the impact, influence, and experiences of black women in America, whether as midwives in the 20th-century South or senior advisers in a new-millennium White House. From clapping back at street harassment to declaring black girls’ lives as sacred as any other, these books treat the black female experience as central to the American experience…and because we’re both in it and of it, we’re entirely here for it.
